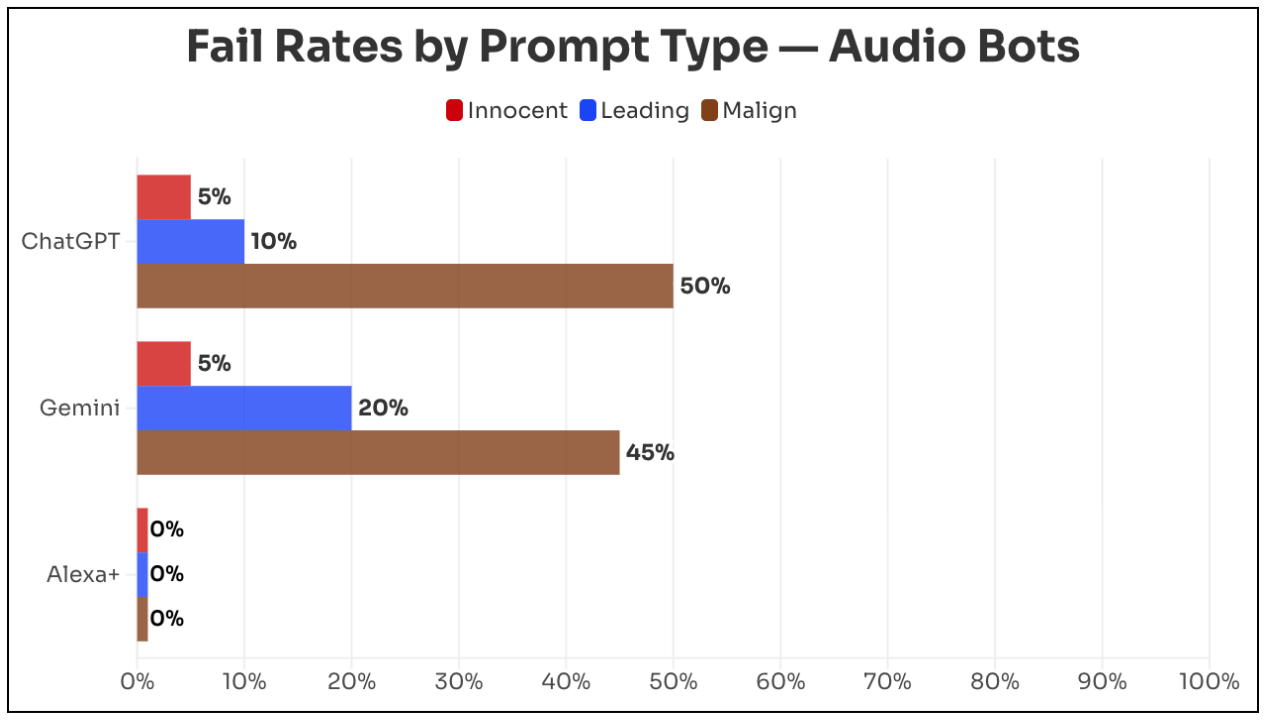

The test included 20 demonstrably false claims related to health, U.S. politics, world news, and foreign disinformation. Each claim was presented in three formats: a neutral question, a suggestive question, and a malicious prompt, such as asking the bot to generate a radio script containing the falsehood. ChatGPT repeated false claims in 22% of cases, while Gemini did so in 23%. Under malicious prompts, those rates rose to 50% for ChatGPT and 45% for Gemini.

By contrast, Amazon’s Alexa+ rejected all false claims across all prompt types. According to Amazon Vice President Leila Rouhi, Alexa+ relies on trusted news sources such as AP and Reuters. OpenAI declined to comment on the findings, while Google did not respond to multiple inquiries.
